In light of the recent tragedy in Newtown, Connecticut, the debate over access to firearms has again been thrust to the fore of our national consciousness. With the resurgence of this debate, the classic “guns don’t kill people” line of argument will inevitably feature prominently in radio conversations, TV interviews, Facebook posts, and tweets. The “guns don’t kill people” trope is part of a larger pattern in how our society frames the relationship between technology and (lack of) collective responsibility.

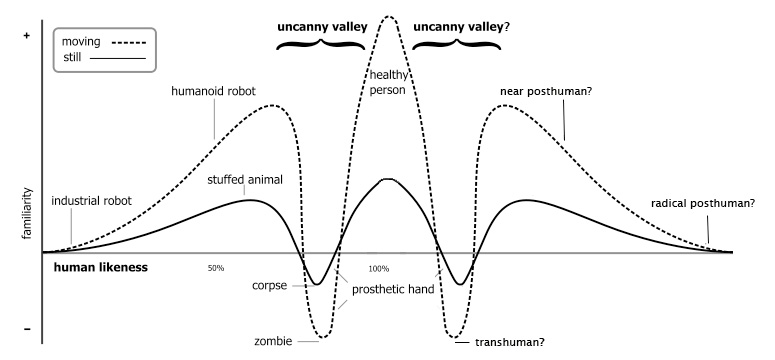

“Guns don’t kill people” is a spin on the broader “technology is neutral” trope–still widely embraced by Silicon Valley–whose function is to absolve the creators of technology from any responsibility for the consequences of what they have designed. The “technology is neutral” trope has long been subject to criticism. From Frankenstein’s monster turning on its creator to Robert Oppenheimer’s own reflections on creating the bomb, Western civilization has wrestled with the question of where responsibility resides in atrocities facilitated by technology, and we are, on occasion, reminded that the choice of what to research and create (or not to research and not to create) is an expression of both individual and cultural values. As the great sociologist Max Weber once said, only through “naive self-deception” does a technician ignore “the evaluative ideas with which he unconsciously approaches his subject matter… that he has selected from an absolute infinity a tiny portion with the study of which he concerns himself.” Technology is never neutral because its birth–its very existence–is the product of both political forces and values-oriented decision making.