On the eve of Yom Kippur, the holiest day on the Jewish calendar, we would normally all gather at synagogue and listen to the recitation of the Kol Nidrei: a prayer, written in Aramaic (as opposed to Hebrew), wherein congregants disavow those oaths they are going to take in the coming year. Why would we do that? Seems like we might be getting ahead of ourselves if, at the start of those 25 hours during which we fast and pray in order to atone for those sins which we have committed the year before, we’re already swearing off the promises we’re about to make.

The answer comes in Judaism’s unfortunately strong familiarity with persecution and diaspora—the prayer is said to have been written by those Jews being forced to pray to another god under threats of torture or death:

All vows, and prohibitions, and oaths, and consecrations…that we may vow, or swear, or consecrate, or prohibit upon ourselves, from the previous Day of Atonement until this Day of Atonement and…from this Day of Atonement until the [next] Day of Atonement that will come for our benefit. Regarding all of them, we repudiate them. All of them are undone, abandoned, cancelled, null and void, not in force, and not in effect. Our vows are no longer vows, and our prohibitions are no longer prohibitions, and our oaths are no longer oaths.

Until I was doing some research for this post, I was under the impression (thanks, most likely, to a misinformed school teacher) that this prayer had been written by the Marranos—a derogatory term, literally meaning “pig” or “swine”, for Jews who were forced into Catholicism during the Spanish Inquisition—who knew they would have to make vows in the coming year which would be, in effect, transgressions against their fellow Jews and against God. As it turns out, the Kol Nidrei (literally “All Vows”) was probably written a few hundred years earlier by a different set of persecuted Jews. Go figure.

As I was growing up, I often heard about so-called (and unfortunately named) “crypto-Jews” who practiced in secret: going down to the basement on a Friday evening, for instance, to light candles to mark the start of the Sabbath, then attending mass on Sunday. The tradition has passed down family lines, even as the reason for lighting those candles has perhaps gotten lost along the way. Janet Liebman Jacobs writes:

The descendants of twentieth-century crypto-Jews living in Mexico report that the women sought a variety of means to conceal the lighting of the Sabbath candles. Among their strategies was the practice of lighting Sabbath oil lamps in a church so that no one would suspect the family of being “sabatistas.”

Kol Nidrei is my favorite prayer and so I have rarely missed attending its recitation in the past 20 years or so. In truth, I’m not a huge fan of congregational prayer—the practice is so personal to me. But there’s something about the architecture and acoustics of sanctuaries, the resonance of the cantor’s calls, and the collective understanding among my fellow congregants. Often, too, my parents are with me, having flown in for the holiday. This year, of course, was different. Instead of finishing up my fast-easing carb-heavy dinner before sundown on the night that Yom Kippur began and heading to synagogue, I went down to my basement office, prayer shawl in hand, to watch a live stream from the Central Synagogue in New York City, one of the few free streams from the sort of congregation with which I prefer to pray.

Yom Kippur for me usually features 25 hours of no devices—no TV, no phone, no radio. I don’t do work. I don’t drive if I can avoid it. I don’t use money. It’s a very real privilege to be able to do this and not one I take lightly. Reform Jewish congregations allow for musical accompaniment and so the service began with a cello solo, a solemn and slow performance that my congregation back in San Diego featured as well. During those few minutes before the cantor begins reciting Kol Nidrei, I attempt to recenter, to bring myself into the moment and shut out the rest of my world. This is much easier when there aren’t glowing screens (my laptop for the live-stream and my iPad for the prayer book) in front of me.

I cried a lot during the next 25 hours until breaking fast with my family upstairs in the kitchen. After Kol Nidrei was recited in full all three times (a tradition meant to accommodate late-comers), Rabbi Angela Buchdahl gave a stirring and emotional sermon about systemic racism in Judaism and its roots in eugenics (total mind-explosion at a rabbi preaching some STS gospel), and I sobbed, exhausted, overwhelmed, and alone in a darkened basement room. What a fucking year.

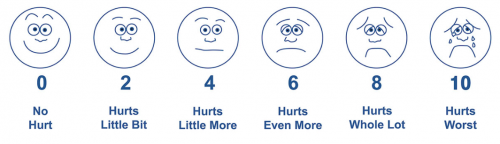

So often on this blog I both read and write about the ways that techno-determinism and dualism lead to demonizing technologies that can actually help us focus, help us connect, and help us recenter. And so here I was, standing in the same space from which I teach my classes, connecting to my religion through devices which I would normally have sworn off, and I was distracted: was the screen bright enough? Too bright? Was there any way to get the PDF software to accommodate a file that read right-to-left? Was my monitor at the right height? Would I be able to stare at this setup for the next day without exacerbating what is already a physically taxing experience?

This was also supposed to be my son’s first Yom Kippur. Even if his 11-month self wouldn’t remember the occasion, I would. But we had sworn off screens for him until his second birthday, a rule we’ve had to bend severely so that his family in cities across the US can see him “live” as he has begun to crawl, climb, babble, and laugh with his whole belly, as only an infant can. My wife offered to bring him to watch the Kol Nidrei screen with me. I resisted. It didn’t feel right.

When my students come to me with arguments about how “technology is bad”—for our children, for our health, for our relationships, etc.—I ask them to consider the larger systemic powers at work. What would I say here? Why was I being forced into my basement, by myself, to practice a sort of Judaism I never asked for? Sure, I suppose I could have gone to one of the few open congregations in my neighborhood, but at the risk of illness or death. Would the Jews who prayed in person consider me a transgressor of Orthodox Judaism’s rules against the use of electronics on holy days and the Sabbath? Would they consider me a Marrano? A pig? I was doing my best. I was practicing one of the highest commandments in Judaism—pikuach nefesh, transgressing in order to save a life.

Or is it I who consider myself the transgressor of my own rules? How do I resolve the struggle between my ideologies around technology and my ideologies around my religious practice? Have I done my son a disservice by withholding this experience in the name of “avoiding screentime”?

When I started writing this, I had hoped to perhaps unpack some of the similarities and differences between the experiences of the crypto-Jews and Jews of the pandemic. I think that goes beyond the scope of the post, but I want to close with a quote from one of Jacobs’s crypto-Jewish research subjects:

On Friday evenings my grandmother would change all her beds. The house had to be clean. She had a small table in her bedroom with two candles, one on each side. Every Friday evening she would light them, and she would not allow anyone in her bedroom except for me…. And she would say some prayers in words that I did not understand.

Jacobs presents the grandmother’s bedroom here as an example of an “invisible” space of resistance. I want to think about my basement that night as a similar space—one wherein, thanks to a technologically mediated connectivity, I could feel my closeness to my religion during a time when Jews and other marginalized people are under direct threat from fascist regimes.