The people are composing the communal and passionate identity of a patriotic nation under military siege.

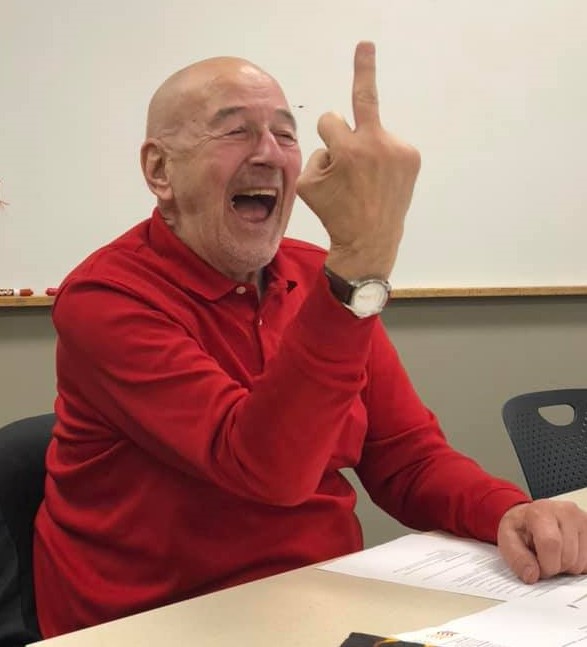

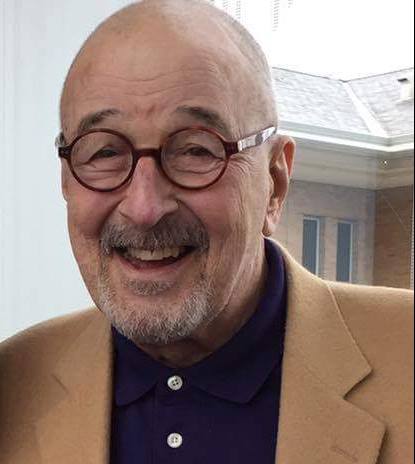

By MONTE BUTE

March 16, 2022, Minneapolis Star Tribune

ATTACK ON UKRAINE

Power is typically defined from the perspective of the powerful, those who issue commands. Disobedience is forbidden. I offer a view from below — power from the vantage point of those expected to obey those commands.

Responding to an unprovoked Russian blitzkrieg of their country, the Ukrainian resistance has discovered what Elizabeth Janeway called the “powers of the weak.” Solidarity is the great equalizer.

It is a simple truth that I learned during my 55 years as a grassroots organizer and 38 years as a sociologist. I have confirmed its truthfulness experientially. I have been a participant-observer in a wide range of social movements and, most of all, in the mysterious and enchanting spaces of collective effervescence that I periodically stumbled upon. Mahatma Gandhi recounts using this method in An Autobiography: The Story of My Experiments with Truth.

For me, one sociological insight stands above all others: Authorities, in all times and in all places, are empowered to write our scripts for us. Resist. Resist with all your powers. Our life stories are a series of existential choices. Our paths are never clear while we are living them, but we still must make each choice as wisely as we can. As someone once said, “We make the road by walking.”

Whenever possible, walk with kindred spirits. It is only at the end of the journey that we will know what our destiny has been.

If the above aphorism sparks your own seeds of fire, share it now with others freely so that they too can bond together in setting metaphorical prairie fires that might, against all hope, save our imperiled species and planet. The people of Ukraine are serving as exemplars who are setting a moral prairie fire that is engulfing the globe; they are schooling the world’s democracies about the meaning of esprit de corps.

Ukrainians are embroiled in a life-changing, historic moment. These episodes are relatively rare in human history, but when they happen, participants are ripped out of their daily lives, and they begin fermenting together for a time. It may be an extended interlude or an ongoing series of transformative chapters.

Individuals experience these emancipatory occasions, yet the transformations are not solitary events.

They emerge in a dimension that the theologian and social philosopher Martin Buber called the “between.”

“On the narrow ridge,” he writes, “where I and Thou meet, there is the realm of ‘between.’ ” Buber continues, “This reality … the knowledge of which will help bring about the genuine person again and to establish genuine community.”

Bonding together in the between is what Emile Durkheim meant by collective effervescence. Resistance movements brew an intoxicating collective consciousness (a social champagne, so to speak) that empowers their participants. Despite nonstop images of suffering, demolition and death, we are also witnessing President Volodymyr Zelenskyy and the citizens of Ukraine composing the communal and passionate identity of a patriotic nation under military siege.

In a silent, meaningless universe (at least for humans), all we have is each other. Our only meaning comes from creating what Martin Luther King Jr. called beloved communities, which provide us shelter from the storm and promote collective effervescence — bread and roses.

During a barbaric invasion of indiscriminate death and destruction, Ukraine’s resistance is forging a beloved community. As Albert Camus put it, “I rebel, therefore we exist.”

Monte Bute is a professor emeritus of sociology at Metropolitan State University in St. Paul.