The presence of white nationalism has been well explored in the run-up to this election, with the alt-right breaking onto the global stage of mainstream media publications. Yet there has been little consideration of the theory of ‘white genocide’. A recent George Washington University study on the Twitter lives of white nationalism and ISIS found that, for both Nazis and other white nationalists, white genocide was the 10th most popular hashtag. Whilst it seems unusual for white nationalists and neo-Nazis to place themselves in a position of weakness, the concept of white genocide is not new. Its dissemination, however, reveals the disturbing dangers of the narrative conventions of the hashtag.

The concept of white genocide is that multiculturalism and non-white immigration are part of a conspiracy to eradicate whites through miscegenation or state-sanctioned murders. Its antecedent in the white nationalist movement is David Lane’s ‘14 words’: “We must secure the existence of our people and a future for white children”. However, it also draws on a far older fear of colonisation, similar to that identified by Michelle Warren in Welsh Arthuriana in her book History on the Edge. She sees the heroic figure of Arthur assuming three particular political cadences: the myth of a glorious past, defeat at the hands of outside enemies and internal traitors, and a prophecy of a return to prior strength. All of these can be detected in white nationalist dogma.

Whilst the ideology is not new, the use of the hashtag #whitegenocide has been a novel and pivotal method of dissemination and legitimisation. Where white nationalist theories had previously been relegated to fringe websites, Twitter allows for easy access to a non-nationalist audience, and grants de facto legitimacy-via-publicity. Broadly speaking, there are two main focuses of #whitegenocide tweets: the ‘defensive’ (the myth of the glorious past) and ‘offensive’ (threats from the Other and from traitors). Taken together, the thousands of tweets with the white genocide hashtag present a narrative far more compelling than traditional white nationalist polemic by dint of its size and multiple narrators.

As Michelle Warren writes, to be colonised is to have boundaries rewritten. In claiming to be subaltern, white genocide theorists shift boundaries so that affiliation is based around ethnicity and a supposedly shared culture instead of national peculiarities (an idea which some members of the alt-right describe as ethno-nationalism). This allows for defensive tweets which uphold broad values of whiteness, through references to the “Pure beauty” of white women and a general emphasis upon genetic superiority:

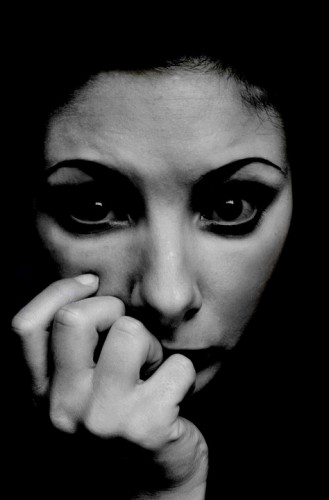

Pure beauty, like pure water, needs to be protected for future generations from all who want to taint, erase, or replace it. #WhiteGenocide pic.twitter.com/kFxknbEYum

— ✠ Oli Nor ✠ (@OliNor2016) September 19, 2016

Diversity makes everything worse. Whites can do far better on our own. #WhiteGenocide pic.twitter.com/I9eNgxeudd #sociology

— Pro-Whites (@ProWhites) September 17, 2016

In contrast to this is the offensive focus: the threat of the Other and the traitor. In part, the power structures described resemble Ranajit Guha’s stratification grid. Jews are presented as a “dominant foreign group”, with their foreignness – and specifically anti-whiteness – emphasised. They are cast overwhelmingly as the architects of white genocide.

#AltRightMeans naming the Jew. pic.twitter.com/DeWdeX49Gn

— Tor Odinssønn (@TorOdinssonn) August 25, 2016

Departing from Guha’s grid, however, white genocide theorists also make claims for what can be described as a “subservient foreign group” – usually people of African origin, but more recently Muslim refugees. They are typified as brutes, deployed as part of a Jewish conspiracy to ‘destroy the white race’, either through physical violence or through miscegenation. They are characterised by their interactions with the white population (‘rapefugee’ has also become a buzzword amongst the alt-right).

These White girls were raped & abused because Western governments let in muslims #UN4RefugeesMigrants #WhiteGenocide pic.twitter.com/W5jwlYcKWx

— Ann Kelly (@LadyAodh) September 19, 2016

And then, beneath them, there are the “dominant indigenous groups” at both a national and local level. These are liberals and ‘cuckservatives’ – turncoats who betrayed their race for political gain. The term cuckservative, borrowed from pornography, is deeply telling: the imagery of a Euro-American male allowing a black man to dominate his white wife is more than a little reminiscent of David Lane’s concern for the future of white children. Although used more often as an insult by itself, the idea of the cuckservative as race traitor does have some currency, as shown in an attack on Ted Cruz.

Ted Cruz The #Cuck ing stops here. "Diversity" is a scam to get rid of Whites #Whitegenocide pic.twitter.com/2zgNQ6TolL

— WhiteGenocideReality (@WhiteGenociders) September 23, 2016

The power of the hashtag is to bind together disparate strands so as to suggest a coherent narrative. A search for #whitegenocide immediately reveals dozens of claims of Jewish conspiracies, attacks on white women and girls, and treacherous politicians. None of these is unique to 2016; what is new is that they have been combined into a single story through Twitter. The result is a compelling narrative for those who want to believe in the apparent horrors of non-whites, subverting the real grievances of minority groups by turning them into the monstrous Other. White nationalists are self-designated subalterns (re)writing their own history and that of those around them via a zone which supposedly supports free speech. In reality, the sheer xenophobia and hatred of the white genocide narrative effectively occludes dissenting voices, often through threats of violence.

Siddharth (Sid) Venkataramakrishnan is a reporter at Columbia Journalism School and contributor at The Daily Telegraph’s education section. He previously read English Language and Literature at Oxford, specialising in medieval vernaculars, and completed his dissertation on intersectionality in early cyberpunk. He can be found @SVR13.
