During the week of July 12, 2004, a group of scholars gathered at Stanford University, as one participant reported, “to discuss current affairs in a leisurely way with [Stanford emeritus professor] René Girard.” The proceedings were later published as the book Politics and Apocalypse. At first glance, the symposium resembled many others held at American universities in the early 2000s: the talks proceeded from the premise that “the events of Sept. 11, 2001 demand a reexamination of the foundations of modern politics.” The speakers enlisted various theoretical perspectives to facilitate that reexamination, with a focus on how the religious concept of apocalypse might illuminate the secular crisis of the post-9/11 world.

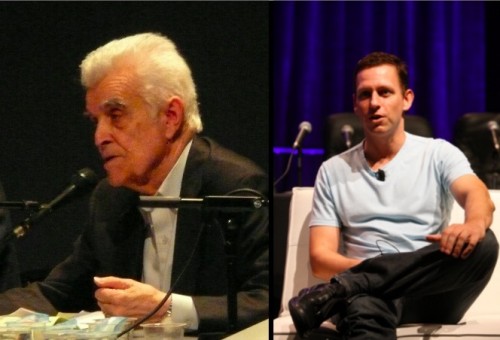

As one examines the list of participants, one name stands out: Peter Thiel, not, like the rest, a university professor, but, at the time, president of Clarium Capital. In 2011, the New Yorker called Thiel “the world’s most successful technology investor”; he has also been described, admiringly, as a “philosopher-CEO.” More recently, Thiel has been at the center of a media firestorm for his role in bankrolling Hulk Hogan’s lawsuit against Gawker, which outed Thiel as gay in 2007 and whose journalists he has described as “terrorists.” He has also drawn headlines for standing as a delegate for Donald Trump, whose strongman populism seems an odd fit for Thiel’s highbrow libertarianism; he recently reinforced his support for Trump with a speech at the Republican National Convention. Both episodes reflect Thiel’s longstanding conviction that Silicon Valley entrepreneurs should use their wealth to exercise power and reshape society. But to what ends? Thiel’s participation in the 2004 Stanford symposium offers some clues.

Thiel’s connection to the late René Girard, his former teacher at Stanford, is well known but poorly understood. Most accounts have described what links the two men as “conservatism,” but this oversimplifies the matter. Girard, a French Catholic pacifist, would likely have found little common ground with most Trump delegates. While aspects of his thinking could be described as conservative, he also called himself an advocate of “a more reasonable, renewed ideology of liberalism and progress.” Nevertheless, as the Politics and Apocalypse symposium reveals, Thiel and Girard shared the conviction that “Western political philosophy can no longer cope with our world of global violence.” “The Straussian Moment,” Thiel’s contribution to the conference, seeks common ground between Girard’s mimetic theory of human social life – to which I will return shortly – and the work of two right-wing, anti-democratic political philosophers who were in vogue in the years following 9/11: Leo Strauss, a cult figure in some conservative circles and a guru to some members of the Bush administration; and Carl Schmitt, a onetime Nazi who has nevertheless been influential among academics of both the right and the left. Thiel notes that Girard, Strauss, and Schmitt, despite various differences, share a conviction that “the whole issue of human violence has been whitewashed away by the Enlightenment.” His dense and wide-ranging essay draws from their writings an analysis of the failure of modern secular politics to contend with the foundational role of violence in the social order.

Thiel’s intellectual debt to Girard’s theories has a surprising relevance to some of his most prominent investments. For anyone who has followed Thiel’s career, the summer of 2004 – the summer when the “Politics and Apocalypse” symposium at Stanford took place – should be a familiar period. About a month afterward, in August, Thiel made his crucial $500,000 angel investment in Facebook, the first outside funding for what was then a little-known startup. In most accounts of Facebook’s breakthrough from dorm-room project to social media empire (including that offered by the film The Social Network), Thiel plays a decisive role: a well-connected tech industry figure, he provided Mark Zuckerberg and his cofounders, then newcomers to Silicon Valley, with credibility as well as cash at a key juncture. What made Thiel see the potential of Facebook before anyone else? We find his answer in an obituary for René Girard (who died in November 2015), which reports that Thiel “credits Girard with inspiring him to switch careers and become an early, and well-rewarded, investor in Facebook.” It was the French academic’s mimetic theory, he claims, that allowed him to foresee the company’s success: “[Thiel] gave Facebook its first $500,000 investment, he said, because he saw Professor Girard’s theories being validated in the concept of social media. ‘Facebook first spread by word of mouth, and it’s about word of mouth, so it’s doubly mimetic,’ he said. ‘Social media proved to be more important than it looked, because it’s about our natures.’” On the basis of such statements, business analyst and Thiel admirer Arnaud Auger has gone so far as to call Girard “the godfather of the ‘like’ button.”

In order to make sense of how Girard informed Thiel’s investment in Facebook, but also how he has shaped Thiel’s ideas about violence, we need to examine the basic tenets of Girard’s thought. Mimetic theory has not been widely applied in social analyses of the internet, perhaps in part because Girard himself had essentially nothing to say about technology in his published oeuvre. Yet the omission is surprising given mimetic theory’s superficial resemblance to the more often discussed “meme theory,” which similarly posits imitation as the basis of culture. Meme theory began with Richard Dawkins’s The Selfish Gene, was codified in Susan Blackmore’s The Meme Machine, and has been applied broadly, in popular and scholarly contexts, to varied internet phenomena. Indeed, the traction achieved by the term “meme” has made most of us witting or unwitting adopters of meme theory. Yet as Matthew Taylor has argued, Girard’s account of mimeticism has significant theoretical advantages over Dawkins-derived meme theory, at least for anyone interested in making sense of the socio-political dimensions of technology. Meme theory tends to reify memes, separating them from the social contexts in which their circulation is embedded. Girard, in contrast, situates imitative behaviors within a general social theory of desire.

Girard’s theory of mimetic desire is simple in its basic framework but has permitted complex, detailed analyses of a wide range of cultural and social phenomena. For Girard, what distinguishes desire from instinct is its mediated form: put simply, we desire things because others desire them. There is some continuity with familiar strands of psychoanalytic theory here. Consider, for example, Slavoj Žižek: “The problem is, how do we know what we desire? There is nothing spontaneous, nothing natural, about human desires. Our desires are artificial. We have to be taught to desire.” Compare this with Girard’s statement: “Man is the creature who does not know what to desire, and who turns to others in order to make up his mind. We desire what others desire because we imitate their desires.” For Girard (and here he differs from psychoanalysis), mimesis is the process by which we learn how and what to desire. Any subject’s desire, he argues, is based on that of another subject who functions as a model, or “mediator.” Hence, as he first asserted in his book Deceit, Desire, and the Novel, the structure of desire is triangular, incorporating not only a subject and an object, but also, and more crucially, a second subject who models the first subject’s desire. Moreover, for Girard, the relation to the object of desire is secondary to the relation between the two desiring subjects – which can eclipse the object, reducing it to the status of a prop or pretext.

The possible applications of this thinking to social media in particular should be relatively obvious. The structures of social platforms mediate the presentation of objects: that is, all “objects” appear embedded in, and placed in relation to, visible signals of the other’s desire (likes, up-votes, reblogs, retweets, comments, etc.). The accumulation of such signals, in turn, renders objects more visible: the more mediated through the other’s desire (that is, the more liked, retweeted, reblogged, etc.), the more prominent a post or tweet becomes on one’s feed, and hence the more desirable. Desire begets desire, much in the manner that Girard describes. Moreover, social media platforms perpetually enjoin users, through various means, to enter the iterative chain of mimesis: to signal their desires to other users, eliciting further desires in the process. The algorithms driving social media, in other words, operate on mimetic principles.

Yet it is not simply that the signaling of desire (for example, by liking a post) happens to produce relations with others, but that the true aim of the signaling of desire through posting, liking, commenting, etc. is to produce relations with others. This is what meme theory obscures and mimetic theory makes clear: memes, far from being autonomous replicators, as meme theory would have it, function as mediators of social relations, and their replication depends entirely on those relations. Recall that for Girard, the desire for any object is always enmeshed in social linkages, insofar as the desire only comes about in the first place through the mediation of the other. A reading of Girard’s analyses of nineteenth-century fiction or of ancient myth suggests that none of this is at all new. Social media have not, as the popular hype sometimes implies, altered the structures that underlie social relations. They merely render certain aspects of them more obvious. According to Girard, what stands in the way of the discovery of mimetic desire is not its obscurity or complexity, but the seeming triviality of the behaviors that reveal it: envy, jealousy, snobbery, copycat behavior. All are too embarrassing to seem socially, much less politically, significant. For similar reasons, to revisit Thiel’s remark, “social media proved to be more important than it looked.”

So far, I have been expanding on what Thiel himself has said and what others have echoed. What accounts of Girard’s role in Thiel’s Facebook investment never mention, however, is the other half of Girard’s theory, the half that Thiel was at Stanford to discuss in 2004: mimetic violence, which, for Girard, is the necessary corollary of mimetic desire.

Thiel invested in and promoted Facebook not simply because Girard’s theories led him to foresee the future profitability of the company, but because he saw social media as a mechanism for the containment and channeling of mimetic violence in the face of an ineffectual state. Facebook, then, was not simply a prescient and well-rewarded investment for Thiel, but a political act closely connected to his other well-known actions, from founding the national-security-oriented startup Palantir Technologies to suing Gawker and supporting Trump.

According to Girard’s mimetic theory, humans choose objects of desire through contagious imitation: we desire things because others desire them, and we model our desires on others’ desires. As a result, desires converge on the same objects, and selves become rivals and doubles, struggling for the same sense of full being, which each subject suspects the other of possessing. The resulting conflicts cascade across societies because the mimetic structure of behavior also means that violence replicates itself rapidly. The entire community becomes mired in reciprocal aggression. The ancient solution to such a “mimetic crisis,” according to Girard, was sacrifice, which channeled collective violence into the murder of scapegoats, thus purging it, temporarily, from the community. While these cathartic acts of mob violence initially occurred spontaneously, as Girard argues in his book Violence and the Sacred, they later became codified in ritual, which reenacts collective violence in a controlled manner, and in myth, which recounts it in veiled forms. Religion, the sacred, and the state, for Girard, emerged out of this violent purgation of violence from the community. However, he argues, the modern era is characterized by a discrediting of the scapegoat mechanism, and therefore of sacrificial ritual, which creates a perennial problem of how to contain violence.

For Girard, to wield power is to control the mechanisms by which the mimetic violence that threatens the social order is contained, channeled, and expelled. Girard’s politics, as mentioned above, are ambiguous: he criticizes conservatism for wishing to preserve the sacrificial logic of ancient theocracies, and liberalism for believing that by dissolving religion it can eradicate the potential for violence. However, Girard’s religious commitment to a somewhat heterodox Christianity is clear, and controversial: he regards the non-violence of the Jesus of the gospel texts as a powerful exception to the violence that has been in the DNA of all human cultures, and an antidote to mimetic conflict. It is unclear to what degree Girard regards this conviction as reconcilable with an acceptance of modern secular governance, founded as it is on the state monopoly on violence. Peter Thiel, for his part, has stated that he is a Christian, but his large contributions to hawkish politicians suggest he does not share Girard’s pacifist interpretation of the Bible. His sympathetic account, in “The Straussian Moment,” of the ideas of Carl Schmitt offers further evidence of his ambivalence about Girard’s pacifism. For Schmitt, a society cannot achieve any meaningful cohesion without an “enemy” to define itself against. Schmitt and Girard both see violence as fundamental to the social order, but they draw opposite conclusions from that finding: Schmitt wants to resuscitate the scapegoat in order to maintain the state’s cohesion, while Girard wants (somehow) to put a final end to scapegoating and sacrifice. In his 2004 essay, Thiel seems torn between Girard’s pacifism and Schmitt’s bellicosity.

The tensions between Girard’s and Thiel’s worldviews run deeper, as a brief overview of Thiel’s politics reveals. As a libertarian, he has donated to both Ron and Rand Paul, and he has also supported Tea Party stalwarts including Ted Cruz. George Packer, in a 2011 New Yorker profile of Thiel, reports that his chief influence in his youth was Ayn Rand, and that in political arguments in college, Thiel fondly quoted Margaret Thatcher’s claim that “there is no such thing as society.” As Packer notes, few claims could be more alien to Thiel’s mentor, Girard, who insisted on the primacy of the collective over the individual and dedicated several books to debunking modern myths of individualism. Indeed, Thiel’s libertarian vision of the heroic entrepreneur standing apart from society closely resembles what Girard derided in his work as “the romantic lie”: the fantasy of the autonomous, self-directed individual that emerged out of European Romanticism. Girard went so far as to suggest replacing the term “individual” with the neologism “interdividual,” which better conveys the way that identity is always constructed in relation to others.

In a seemingly Ayn Randian vein, Thiel likes to call tech entrepreneurs “founders,” and in lectures and seminars has compared startups to monarchies. He envisions “founders” in mythical terms, citing Romulus, Remus, Oedipus, and Cain, figures discussed at length in Girard’s analyses of myth. Thiel’s pro-monarchist statements have been parsed in the media (and linked to his support for the would-be autocrat Trump), but commentators have not noted the striking ambiguities of a self-proclaimed devotee of René Girard advocating for monarchy. According to Girard’s counterintuitive analysis, monarchical power is the obverse side of scapegoating. Monarchy, he hypothesizes, has its origins in the role of the sacrificed scapegoat as the unifier and redeemer of the community; it developed when scapegoats managed to delay their own ritual murder and secured a fixed place at the center of a society. A king is a living scapegoat who has been deified, and can become a scapegoat again, as Girard illustrates in his reading of the myth of Oedipus: Oedipus begins as an outsider and goes on to become king, but is ultimately blamed for the community’s ills, drawing collective violence onto himself before being expelled and returned to his outsider status.

If Thiel, as he revealed in a 2012 seminar, views the “founder” as potentially both a “God” and a “victim,” then he must regard the broad societal influence wielded by the tech élite as a source of risk: a king can always become a scapegoat. On these grounds, it seems reasonable to conclude that Thiel’s animus against Gawker, which he has repeatedly accused of “bullying” him and other Silicon Valley power players, is closely connected to his core concern with scapegoating, derived from his longstanding engagement with Girard’s ideas. Thiel’s preoccupation with the risks faced by the “founder” also has a close connection to his hostility toward democratic politics, which he regards as placing power in the hands of a mob that will victimize those it chooses to play the role of scapegoat. As he has put it: “the 99% vs. the 1% is the modern articulation of [the] classic scapegoating mechanism: it is all minus one versus the one.”

No serious reader of Girard can regard a simple return to monarchical rule – which Thiel has sometimes seemed to favor – as plausible: the ritual underpinnings that were necessary to maintain its credibility, Girard insists, have been irreversibly demystified. Perhaps on the basis of this recognition, and even while hedging his bets through his involvement in Republican politics, Thiel has focused instead on the new possibilities offered by network technologies for the exercise of power. A Thiel text published on the website of the libertarian Cato Institute is suggestive in this context: “In the 2000s, companies like Facebook create . . . new ways to form communities not bounded by historical nation-states. By starting a new Internet business, an entrepreneur may create a new world. The hope of the Internet is that these new worlds will impact and force change on the existing social and political order.” Although Thiel does not say so here, from a Girardian point of view, one founds a community by bringing mimetic violence under institutional control – precisely what the application of mimetic theory to Facebook would suggest that the platform does.

As we saw previously, Thiel was ruminating on Strauss, Schmitt, and Girard in the summer of 2004, but also on the future of social media platforms, which he found himself in a position to help shape. It is worth adding that around the same time, Thiel was involved in the founding of Palantir Technologies, a data analysis company whose main clients are the US Intelligence Community and Department of Defense – a company explicitly founded, according to Thiel, to forestall acts of destabilizing violence like 9/11. One may speculate that Thiel understood Facebook to serve a parallel function. According to his own account, he identified the new platform as a powerful conduit of mimetic desire. In Girard’s account, the original conduits of mimetic desire were religions, which channeled socially destructive, “profane” violence into sanctioned forms of socially consolidating violence. If the sacrificial and juridical superstructures designed to contain violence had reached their limits, Thiel seemed to understand social media as a new, technological means of achieving comparable ends.

If we take Girard’s mimetic theory seriously, the consequences for the way we think about social media are potentially profound. For one, it would lead us to conclude that social media platforms, by channeling mimetic desire, also serve as conduits of the violence that goes along with it. That, in turn, would suggest that abuse, harassment, and bullying – the various forms of scapegoating that have become depressing constants of online behavior – are features, not bugs: the platforms’ basic social architecture, by concentrating mimetic behavior, also stokes the tendencies toward envy, rivalry, and hatred of the Other that feed online violence. From Thiel’s perspective, we may speculate, this means that those who operate these platforms are in a position to harness and manipulate the most powerful and potentially destabilizing forces in human social life – and, most remarkably, to derive profits from them. For someone overtly concerned about the threat such forces pose to those in positions of power, a crucial advantage would seem to lie in the possibility of deflecting violence away from the prominent figures who are the most obvious potential targets of popular ressentiment, and into internecine conflict among users.

Girard’s mimetic theory can help illuminate what social media does, and why it has become so central to our lives so quickly – yet it can lead to insights at odds with those drawn by Thiel. From Thiel’s perspective, it would seem, mimetic theory provides him and those of his class with an account of how and to what ends power can be exercised through technology. Thiel has made this clear enough: mimetic violence threatens the powerful; it needs to be contained for their – his – protection; as quasi-monarchs, “founders” run the risk of becoming scapegoats; the solution is to use technologies to control violence – this is explicit in the case of Palantir, implicit in the case of Facebook. But there is another way of reading social media through Girard. By revealing that the management of desire confers power, mimetic theory can help us make sense of how platforms administer our desires, and to whose benefit. For Girard, modernity is the prolonged demystification of the basis of power in violence. Unveiling the ways that power operates through social media can continue that process.