Colin Koopman, an associate professor of philosophy and director of new media and culture at the University of Oregon, wrote an opinion piece in the New York Times last month that situated the recent Cambridge Analytica debacle within a larger history of data ethics. Such work is crucial because, as Koopman argues, we are increasingly living with the consequences of unaccountable algorithmic decision-making in our politics, and the fact that “such threats to democracy are now possible is due in part to the fact that our society lacks an information ethics adequate to its deepening dependence on data.” It shouldn’t be a surprise that we are facing massive, unprecedented privacy problems when we let digital technologies far outpace discussions around ethics or care for data.

For Koopman the answer to our Big Data Problems is a society-spanning change in our relationship to data:

“It would also establish cultural expectations, fortified by extensive education in high schools and colleges, requiring us to think about data technologies as we build them, not after they have already profiled, categorized and otherwise informationalized millions of people. Students who will later turn their talents to the great challenges of data science would also be trained to consider the ethical design and use of the technologies they will someday unleash.”

Koopman is right, in my estimation, that the response to widespread mishandling of data should be an equally broad corrective. Something that is baked into everyone’s socializing, not just that of professionals or regulators. There’s still something that itches, though. Something that doesn’t feel quite right. Let me get at it with a short digression.

One of the first and foremost projects Cyborgology ever undertook was a campaign to stop thinking of what happens online as separate and distinct from a so-called “real world.” Nathan Jurgenson originally called this fallacy “digital dualism,” and for the better part of a decade all of us have tried to show the consequences of adopting digital dualism. Most of these arguments involved preserving the dignity of individuals and pointing out the condescending, often ableist and ahistorical precepts that one had to accept in order to agree with digital dualists’ arguments. (I’ve included what I think is a fairly inclusive list of our writing on this below.) What was endlessly frustrating was that in doing so, we would often, to put it in my co-editor Jenny Davis’ words, “find [our]selves in the position of technological apologist, enthusiast, or utopian.”

Today I must admit that I’m haunted by how much time was wasted in this debate. That we and many others had to advocate for the reality of digital sociality before we could get to its consequences, and now those consequences are here and everyone was caught flat-footed. I don’t mean to overstate my case here. I do not think that eye roll-worthy Atlantic articles are directly responsible for why so many people were ignoring obvious signs that Silicon Valley was building a business model based on mass surveillance for hire. What I would argue, though, is that it is easy to go from “Google makes us stupid and Facebook makes us lonely” to “Google and Facebook can do anything to anyone.” Social media has gone from inauthentic sociality to magical political weapon. Neither position reckons with the digital in a nuanced, thoughtful way. Instead, each foregrounds technology as a deterministic force and relegates human decision-making to good intentions gone bad and hapless end users.

This is all the more frustrating, or even anger-inducing, when you think about the fact that so many disconnectionists weren’t doing much more than hawking a corporate-friendly self-help regimen that put the onus on individuals to change and left management off the hook. Nowhere in Turkle’s, Carr’s, and now Twenge’s work will you find, for example, stories about bosses expecting their young social media interns to use their private accounts or do work outside of normal business hours.

All this makes it feel really easy to blame the victims when it comes to near-future autopsies of Cambridge Analytica. How many times are we going to hear about people not having the right data “diet” or “hygiene” regimen? How often are writers going to take the motivations and intentions of Facebook as immutable and go directly to what individuals can or should do? You can also expect parents to be browbeaten into taking full responsibility for their children’s data.

Which brings me back to Koopman’s prescription. I’m always reluctant to add another thing to teachers’ full course loads and syllabi. In my work on engineering pedagogy I make a point of saying that pedagogical content has to be changed or replaced, not added. And so here I want to focus on the part that Koopman understandably sidesteps: the change in cultural expectations. Where is the bulk of that change going to be placed? Who will do that work? Who will be held responsible?

I’m reminded of the Atomic Priesthood, one of several admittedly “out there” ideas from the Human Interference Task Force, whose job it was to ensure that people 10 to 24 thousand years later would stay away from buried fissile material. It is one of the ultimate communication problems because you cannot reliably assume any shared meaning. Instead of a physical sign or monument, linguist Thomas Sebeok suggested an institution modeled on organized religion. Religions, after all, are probably the oldest and most robust means of projecting complex information into the future.

A Data Priesthood sounds overwrought and a bit dramatic. But I think the kernel of the idea is sound: the best way we know how to relate complex ethical ideas is to build ritual and myth around core tenets. If we do such a thing, might I suggest we try to involve everybody but keep the majority of critical concern on those who are looking to use data, not the subjects of that data. This Data Reformation, if you will, has to increase scrutiny in proportion to power. If everyone is equally responsible, then those who play with and profit from the data can always hide behind an individualistic moral code that blames the victim for not doing enough to keep their own data secure.

David is on Twitter

Past Works on Digital Dualism:

- https://thesocietypages.org/cyborgology/2011/02/24/digital-dualism-versus-augmented-reality/
- https://thesocietypages.org/cyborgology/2012/10/29/strong-and-mild-digital-dualism/
- https://thesocietypages.org/cyborgology/2013/03/01/responding-to-carrs-digital-dualism/
- https://thesocietypages.org/cyborgology/2013/03/11/dude-ly-digital-dualism-debates/
- https://thesocietypages.org/cyborgology/2013/03/01/always-already-augmented/
- https://thesocietypages.org/cyborgology/2013/06/03/digital-dualist-conservatism/
- https://thesocietypages.org/cyborgology/2013/10/17/is-digital-dualism-really-digital-ideal-theory/
- https://thesocietypages.org/cyborgology/2012/05/24/digital-dualism-and-stories-of-the-real/
- https://thesocietypages.org/cyborgology/2013/04/03/difference-without-dualism-part-iii-of-3/
- https://thesocietypages.org/cyborgology/2016/08/08/make-conversation-great-again/
- http://kernelmag.dailydot.com/issue-sections/staff-editorials/14961/sherry-turkle-reclaiming-conversation-technology–empathy/
- https://thenewinquiry.com/the-irl-fetish/
- https://thenewinquiry.com/the-disconnectionists/
- https://thenewinquiry.com/fear-of-screens/

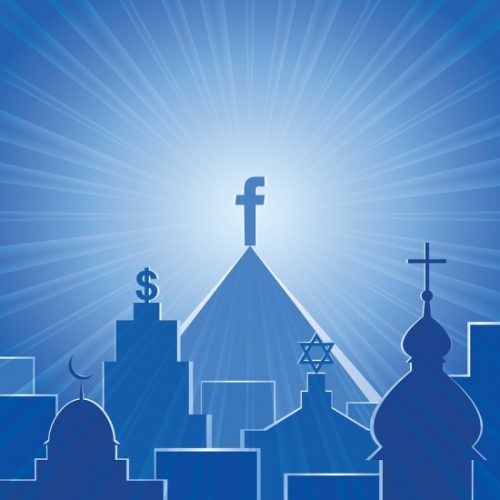

What if Facebook but Too Religious. Image credit: Universal Life Church Monastery