Facts about all manner of things have made headlines recently as the Trump administration continues to make statements, reports, and policies at odds with things we know to be true. Whether it’s about the size of his inauguration crowd, patently false and fear-mongering inaccuracies about transgender persons in bathrooms, rates of violent crime in the U.S., or anything else, lately it feels like the facts don’t seem to matter. The inaccuracies and misinformation continue despite the earnest attempts of so many to correct each falsehood after it is made. It’s exhausting. But why is it happening?

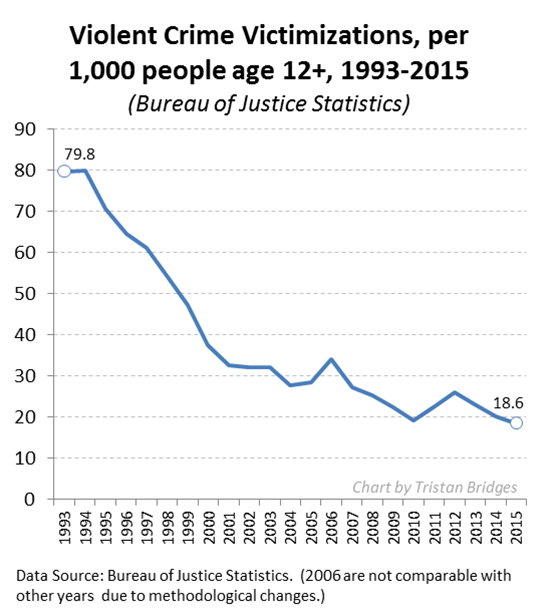

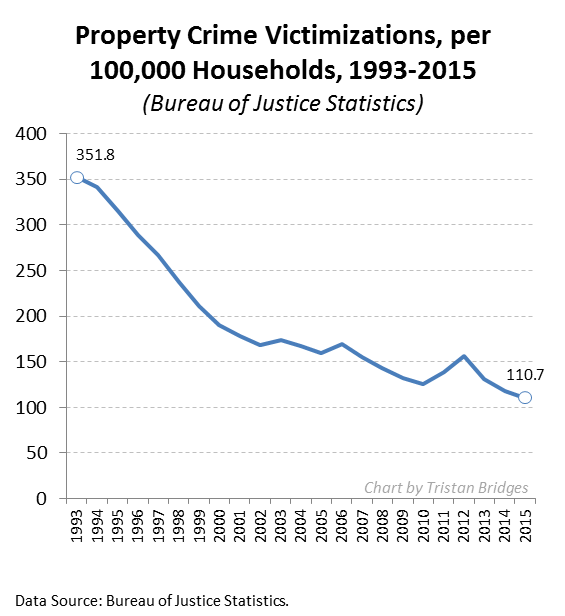

Many of the inaccuracies seem like they ought to be easy enough to challenge as data simply don’t support the statements made. Consider the following charts documenting the violent crime rate and property crime rate in the U.S. over the last quarter century (measured by the Bureau of Justice Statistics). The overall trends are unmistakable: crime in the U.S. has been declining for a quarter of a century.

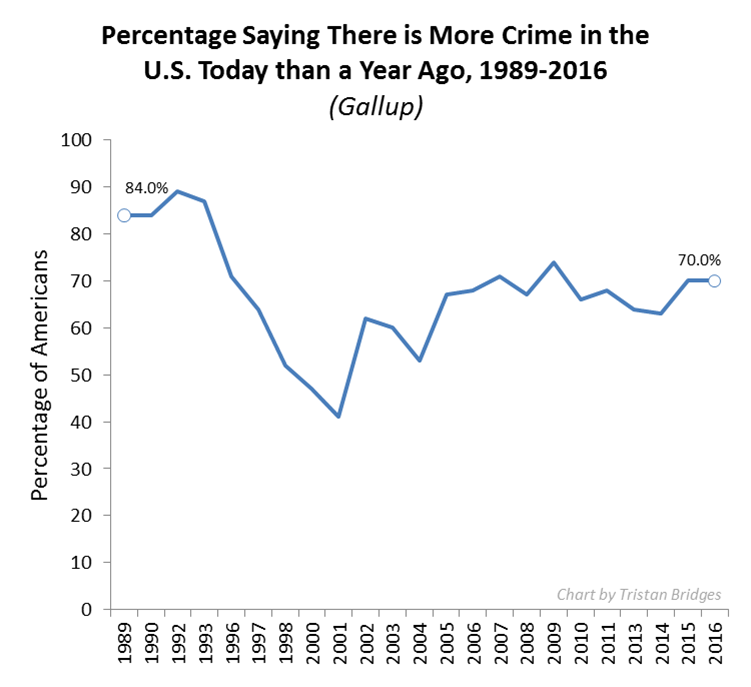

Now compare the crime rate with public perceptions of the crime rate collected by Gallup (below). While the crime rate is going down, the majority of the American public seems to think that crime has been getting worse every year. If crime is going down, why do so many people seem to feel that there is more crime today than there was a year ago? It’s simply not true.

There is more than one reason this is happening. But, one reason I think the alternative facts industry has been so effective has to do with a concept social scientists call the “backfire effect.” As a rule, misinformed people do not change their minds once they have been presented with facts that challenge their beliefs. But, beyond simply not changing their minds when they should, research shows that they are likely to become more attached to their mistaken beliefs. The factual information “backfires.” When people don’t agree with you, research suggests that bringing in facts to support your case might actually make them believe you less. In other words, fighting the ill-informed with facts is like fighting a grease fire with water. It seems like it should work, but it’s actually going to make things worse.

To study this, Brendan Nyhan and Jason Reifler (2010) conducted a series of experiments. They had groups of participants read newspaper articles that included statements from politicians that supported some widespread piece of misinformation. Some of the participants read articles that included corrective information that immediately followed the inaccurate statement from the political figure, while others did not read articles containing corrective information at all.

Afterward, they were asked a series of questions about the article and their personal opinions about the issue. Nyhan and Reifler found that how people responded to the factual corrections in the articles they read varied systematically by how ideologically committed they already were to the beliefs that such facts supported. Among those who believed the popular misinformation in the first place, more information and actual facts challenging those beliefs did not cause a change of opinion—in fact, it often had the effect of strengthening those ideologically grounded beliefs.

It’s a sociological issue we ought to care about a great deal right now. How are we to correct misinformation if the very act of informing some people causes them to redouble their dedication to believing things that are not true?

Tristan Bridges, PhD is a professor at the University of California, Santa Barbara. He is the co-editor of Exploring Masculinities: Identity, Inequality, Continuity, and Change with C.J. Pascoe and studies gender and sexual identity and inequality. You can follow him on Twitter here. Tristan also blogs regularly at Inequality by (Interior) Design.

Comments 70

pamelina — February 27, 2017

There's also the repetition bias: people believe things they've heard several times, even if those things are not true.

"Correcting" a Trump lie, for example, involves repeating it. Seems to me that's counterproductive, to say the least. Headlines that repeat Trump's outrageous claims and then debunk them in the body of the article are a particular problem. Look at the current common-but-false idea that Trump's "just fulfilling his campaign promises" by doing these harmful things. He's not fulfilling his economic and anti-corruption "drain the swamp" promises.

I think that's especially a problem when it comes to climate change and libertarian/crony capitalist trickle-down economic theories.

Brian Pansky — February 27, 2017

There is scientific evidence that the backfire effect might be able to be overwhelmed by more evidence:

http://onlinelibrary.wiley.com/doi/10.1111/j.1467-9221.2010.00772.x/abstract

I'm also in favor of the idea that people can learn to counter the backfire effect within their own minds:

https://www.youtube.com/watch?v=fLG0kkgnRkc

James McRitchie — February 27, 2017

I teach an occasional class on corporate governance, which touches on the problem of wealth distribution. Most students in class think we are the wealthiest country in the world. By average wealth, we ARE wealthy: $244,000, 5th after Switzerland, Australia, France and Norway.

But if we look at median wealth, it is $44,900 and we are ranked below Spain and Greece. 1/5 the median wealth of Australia, less than 1/2 that of Canada. Myths persist.

Stoph Long — February 28, 2017

The You Are Not So Smart podcast (https://youarenotsosmart.com/ ) just had a great episode about how to overcome the backfire effect. I wrote a web-app inspired by it that I think of as training wheels for applying the process. The web-app and the links to the podcast and related material are here: http://Stophlong.pythonanywhere.com

Igoriginal — February 28, 2017

The greater portion of humanity has ALWAYS been "allergic to facts." This is not a new trend. Look at how the handful of critical-thinking pioneers and scientific trailblazers even during the tail-end of the European Renaissance were treated. Take the likes of Galileo Galilei, for instance. How did the majority respond to him, when the refinement of his "spy glass" into a higher-powered optical tube (the forebear of the modern telescope) revealed strong evidence and confirmed the Copernican theory that the Earth was NOT the center of cosmology (geocentrism), but rather that it was one of a number of planetary bodies which circled a system with the Sun as the center (heliocentrism)?

What happened next? Even when Galileo humbly and amicably offered many long-time and distinguished scholars, church clergy, and noblemen to peer through his telescope, and see with their own eyes, how did they respond?

"Well, I'll be damned! The Earth is really NOT the center of god's creation, after all. It's just one of MANY balls of dust out there, and probably not anything particularly spectacular at that, when placed against the backdrop of the entire universe."

Was that how the deeply-entrenched "institutional thinkers" and "university authorities" reacted to this paradigm shift in cosmology that was laid out before them, clear as daylight? Heck, no! They dug in their heels, felt insulted, incensed, and some of the most prominent and decorated figures of society fervently insisted that Galileo's device was "trickery", "sorcery", "deception", and in some cases even denounced as "the work of the devil."

This is just one of MANY examples, demonstrating that the human creature (on the whole) is a heavily-opinionated, typically closed-minded, and overall lazy creature of habit, thus zealously resistant to ANY piece of emerging knowledge that forces it to drastically re-think and abridge its world-view.

So, you see, that this "allergy to facts" is nothing new under the sun. In every era, only a HANDFUL of deep-thinking explorers, pioneers, inventors, and tinkerers have been courageous enough to put their own personal feelings and biases aside and instead discipline themselves to place HARD SCIENCE and EVIDENCE-BACKED KNOWLEDGE at the forefront of their priorities in life.

Again, this is NOT an easy thing to do, in ANY century or era. Because, as stated, we are creatures of habitual coziness. We would rather rebel against truth, itself, if it meant that we were required to let go of any of our deeply-cherished traditions and preconceptions, because those are our "security blankets." Familiarity is cozy. It is warm. It is reassuring. But, it is also our greatest DOWNFALL, if newly-emerging evidence to the contrary compels us to let go of those "blankies" and "binkies" but we are stubbornly and petulantly - like that of sniveling and whining adolescents - unwilling to do so.

Indeed, it takes a human being with an EXCEPTIONAL constitution of scientific fortitude, self-checking accountability, and critical-thinking mettle, to embrace newly-emerging evidence-based truth no matter HOW "uncomfortable", or "offensive", or "blasphemous" it may seem. Not too many people are wired like that. And not too many people would even be willing to RE-WIRE themselves, even if given the opportunity and means to do so. And that is why ... the MAJORITY of humanity is "allergic to facts", no matter what age we live in.

The harsh reality is that, as far as we have evolved, we still have far more evolving yet to undergo. That is, if we wish to see a future human civilization in which the MAJORITY is willing to embrace newly-emerging evidence, rather than just a tiny sliver of minority "misfits", "eccentrics", "deviants", "seditionists", "freaks", and "blasphemers."

Andrew Angle — February 28, 2017

That's it? I was hoping the article provided the answer to this dilemma.

http://tinyurl.com/jhjpw6y

Tom Steele — February 28, 2017

You have to give people a way out of their belief that preserves their self image. They need a path to the correct viewpoint that allows them to say, "it wasn't my fault I believed that way," or "I was right when I thought that but now things have changed so my views have changed too."

People don't want to have to admit they were wrong or foolish.

Prudence Tucker — February 28, 2017

I would have shared this article on FB if it didn't start out with a recital of Trump's lies. That would have turned off the folks that shut down when there's mention that Trump lies - in other words, sharing this would "backfire."

Kris — February 28, 2017

There is a tradition in social psychology going back to the 1950s looking at this. "Backfire" happens sometimes, and sometimes it doesn't happen. In itself it doesn't explain much. Looking at the literature on cognitive dissonance and motivated reasoning for understanding would be more useful in my view.

manabozhocoyote — March 1, 2017

I developed a critical thinking / writing class for college juniors. My grad thesis was a comparison of the predictive power of scientific models across disciplines from physical chemistry to sociology, published in Am J Physiology. I was a hippie in the counterculture. My reaction: this treatise doesn't seem balanced. It seems like another defense of the 2017 politically progressive holy writ, item by item. I came away with the impression that the piece isn't "people" who are averse to "facts." It's "those people" who are averse to "our favorite facts." The unemployment rate is a fact, but so is the workforce participation rate. One looks good, the other looks like a multi-decade debacle. The deficit is lower, but the national debt doubled. Both are facts, but what's up with the economy? It would be very encouraging if more of the erudite coverage of the behavior of "dumb people behaving badly" picked one example on *each* side of the political divide, and dismantled some favorite flawed thinking on both the political left and right in the same argument. Would that be so difficult?

Angela L Nobbs — March 1, 2017

The FACT that the journalists have been caught over and over again lying to us because of their own bias has A LOT TO DO WITH IT. I watched CNN every day, and witnessing the MANY LIES during the last 2 years is ONE of the MANY reasons I voted for Trump! So now I don't know who to believe. I find myself flipping the channels between FOX and CNN and trying to decipher what's actually true. IT'S EXHAUSTING! The MEDIA HAS/IS SINGLE HANDEDLY DESTROYING OUR DEMOCRACY. I don't have enough time in a day to make enough money to feed my family, let alone fact check the people we should be able to trust with FACTS! WE ARE DOOMED! I HAVE NEVER FELT SUCH DESPAIR IN MY LIFE! GOD HELP US ALL!!!

WittoStevie — March 2, 2017

This article would also explain why there are so many who are believers in one god or another, regardless of being shown the staggering number of inconsistencies in whatever texts are preferred.

Kathy Kramer — March 2, 2017

People have to change their approach. Ask them instead to explain why they believe the way that they do. Ask them to explain. And then listen to them. "Correcting" people won't fix this problem of spreading misinformation. It's a process. You're never going to get someone to change their minds when they perceive that you're attacking them.

One thing this article did not mention is that people who tend to hang onto these beliefs already feel that they are in some kind of marginalized group and it's "us vs. them". Die-hard Trump supporters feel as if they are in a marginalized group. Anti-vaxxers are the same way. They may not actually be in a marginalized group (like, say, Muslims, for example), but that doesn't matter because how they *perceive* themselves is what matters and the more people point out facts and expect them to instantly change their minds, the deeper they will dig in their heels to hang onto their beliefs.

Janice Rael — March 2, 2017

I thought it was because of God.

ZneroLmiT — March 3, 2017

Some thoughts about the stats on fear.

1. The population increase may mean the number of crimes is UP, even though the rate is lower.

2. Media coverage of crime. The local news to the national networks love churning out crime on camera - surveillance, police monitors & camera phones. Ratings and fear.

Don Benson — March 3, 2017

From a social psychological perspective, we all need to recognize that as adults, most of us choose to belong to social groups that align with our values, providing validation, love and affection, income, etc. Accepting new information/knowledge raises the challenge around continued belonging to those groups, or for that matter the internal stress of accepting that foundational elements of our belief systems may not be valid. Few have the fortitude to risk divorce, alienation, rejection by religious congregations, etc., with no obvious group to then join. Some even are willing to die defending these foundational elements. The primary "transition object" for those willing to change is AA for alcoholics.

bstar53 — March 4, 2017

I serve on our county deer advisory committee (CDAC) and we are required to consider input from hunters in making management decisions. The only problem is that hunter surveys and testimony tend to run as high as 8:1 in believing that the deer herd is dwindling. Yet year over year we have had increasing deer harvests and are near historical highs, which is consistent with statistical estimates by the DNR. As a result we are unable to affect deer numbers in any rational manner and populations are going well beyond the carrying capacity of the land in many areas. It's a disaster in the making! https://uploads.disquscdn.com/images/a7529b2395515029d2da28a1dc82fed54cc3e7b275e379ae814db5162b74526c.png

Megalith — March 5, 2017

This explains the last 8 years of Obama sycophants.

Shem the Penman — March 7, 2017

When you're wrong, you should own up to it and change your beliefs.

I tell other people that all the time.

KWhit — March 7, 2017

I would really love to write an article about possible answers to this problem- obviously it needs to be as neutral as possible, but going off of what everyone has said in their comments- I want it to encourage ppl to accept new thoughts and ideas that they normally would not, create and establish new schema to go off of when they analyze news articles or news briefs, and maybe encourage others in their social groups to do the same, all in a way that is non-threatening... Is anyone interested in venturing into this territory with me, or does anyone have any ideas of where to start?

Robin Tucker — March 8, 2017

If you are older, and have lived through these decades, and remember not having to lock your door or your car, and not worrying about assault, and then, in the last 10 years, suffered a break-in at your house while you were sleeping in your bed, and been robbed 8 times, you might question the data. After the second time my car was robbed, along with those of many neighbors, we learned it is pointless to make a report. I wonder how many others have given up on reporting crimes? Personal experience overrides data, at least for me.

disqus_SDuHGwj5tN — March 14, 2017

Have you considered that the real issue is that people do not think in terms like "crimes per 100,000 households"? By human nature, as well as how news stories are picked, people are just hearing about many more crimes than they used to because crime is more interesting and there are more of them.

Anthony Alfidi — August 19, 2018

People change their beliefs when they experience some real-world negative consequence as a result of following their beliefs. Misinformed people need to experience emotional pain or pay a financial price that causes them to change. No one likes being embarrassed, and the easiest way for a typical person to get past embarrassment is to deny it happened - by claiming they believed something different all along.

Cara Ramsey — August 20, 2018

I've long had two personal "rules" that just happen to summarize this entire article.

Cara's Rule: "Homo sapiens is not a rational species; it is a rationalizing species. Understand this and the entire world begins to make more sense."

Cara's Corollary: "People only change their minds about their world view after a significant and traumatic spiritual, emotional, or psychological epiphany."

The problem is that we tell ourselves we are rational. We're not. We're rationalizing. That's an important and critical difference, and the root of what is wrong with our expectations about logic, facts, and truth.

– You can't convince a "skeptic," and that should worry you (No puedes convencer a un «escéptico» y eso debería preocuparte) — March 12, 2019

[…] people who think the opposite way helps to build a healthier society. Attacking beliefs produces the opposite of the desired effect, insulting people does not make them change their beliefs, and data or arguments […]

Why do so many people ignore facts? • Skeptical Science — May 3, 2021

[…] Bridges has written an interesting article within Sociological Images. It catches attention because it highlights the rather interesting observation that facts just […]

johonson — August 12, 2024

People often resist facts because they challenge deeply held beliefs or self-identity, causing cognitive dissonance. When confronted with information that conflicts with their views, individuals might experience discomfort and defensiveness, leading them to dismiss or rationalize away the facts. Additionally, social and emotional factors, such as group identity and personal biases, can further reinforce resistance, making it easier to cling to familiar, though inaccurate, narratives rather than confront uncomfortable truths.

Roy — May 17, 2025

People often resist facts when they challenge deeply held beliefs, emotions, or identities. Cognitive biases and echo chambers further reinforce misinformation. Many choose narrative over truth when it feels more comfortable or familiar.