As a follow-up to my previous post about the Center for Humane Technology, I want to examine more of their mission [for us] to de-addict from the attention economy. In this post I write about ‘time well spent’ through the lens of Sarah Sharma’s work on critical temporalities, and share an anecdote from my (ongoing) fieldwork at a recent AI, Technology and Ethics conference.

Time Well Spent is an approach that de-emphasizes a life rewarded by likes, shares and follower counts. ‘Time well spent’ is about “resisting the hijacking of our minds by technology”. It is about not allowing the cunning of social media UX to lead us to believe that the News Feed or TimeLine are actually real life. We are supposed to be participants, not prey; users not abusers; masterful and not entangled. The sharing, pouting, snarking, loving, hating and sexting we do online, and at scale, is damaging personal well-being and the pillars of society, and must be diverted to something else, the Center claims.

As I have argued before in relation to the addiction metaphor the Center uses, ‘time well spent’ implies the need for individual disconnection from the attention economy. It is about recovering time that is monetized as attention. This is a notion of time that belongs to the self, un-tethered, as if it were not enmeshed in relationships with others and in existing social structures.

“What Foucault is to Power, Sarah Sharma is to Time”: Time, like power, is everywhere; it is differential, flows, changes shape, and organizes society and relations between bodies. Sharma’s In the Meantime: Temporality and Cultural Politics explores how relationships to time organize and perpetuate inequalities in society.

Her ethnographic work develops a ‘power-chronography’ approach, a level-up riff on Doreen Massey’s ‘power-geometry’. ‘Power-chronographies’ register the bio-political dimensions of how Time and Place are calibrated for us by the outer structures of our lives, which work inward to eventually shape who we (think) we are. Sharma spends time with taxi drivers, slow food makers, frequent flyers and office workers and finds that time, for many people, is not theirs to manipulate, hoard, speed up or slow down. She writes:

“Individual experiences of time depend upon where people are positioned within a larger economy of temporal worth. The temporal subject’s day includes technologies of the self that are cultivated through synchronizing to the time of others and also having others synchronize to them. In this way the meaning of one’s time is in large part structured and controlled by both the institutional arrangements inhabited and the time of others—other temporalities.”

Harris’ approach constructs social media time as ‘me-time’, an individualized emphasis on being social. However, the digital ecosystem generally, and some social media platforms, host both public and intimate economies of care and work that make getting off near impossible. Migrants maintain family relationships across distance; entrepreneurs set up and manage businesses; millions are employed by digital apps and platforms; activists amplify their causes; marginalized people find community. Not spending time on these platforms is not a choice for many people.

Recent initiatives, such as Platform Co-operativism and the scholars and activists gathering at the upcoming event Log-Out: Worker Resistance Within and Against the Platform Economy, bring critical labor politics perspectives to the monetization of our time-as-work and work-time on these platforms. These offer ways to think critically about the Center’s concern with time-as-individualized, through labor organizing and rights, and through the history of scholarship on Taylorism, management science and the capitalist structuring of work-as-time, broadly.

The questionable gift of social media apps and platforms is that they would make us all more efficient – personally and professionally – because our deep connections across a limited number of platforms would make things faster, and “friction-less”. It is an oft-repeated observation that when email was first available it was something of a reprieve from having to respond immediately, as you might to a phone call. Technology made a promise that you could manipulate, expand and make time; but this delay has become perverted, and UX became a sort of handmaid to this effort. So the notification lurks there saying “oh you don’t have to look at this now, you just need to know something has arrived for you and you can look at it whenever you have the time.” In Harris’ list of things to do to minimize social media addiction, turning off notifications is one.

When Harris talks about spending our time well, and about well-being, I claim he wants us to re-calibrate time in a continuation of a familiar neoliberal agenda. You are responsible for how you spend your time. Find a number that values what you do with your time [which is a number that is supposed to reflect what you do, not who you are]. Slow down; Sharma writes about slow food, wellness, yoga in the office, listening to your body. But also: maximize your slowed-down time.

Yet we’re told things are always speeding up thanks to technology: high-frequency trading algorithms, machine learning and automation all promise to compress time. Sharma says that it isn’t so much about speeding up, or the value placed on slowing down, as it is about re-calibration:

“What most populations encounter is not the fast pace of life but the structural demand that they must recalibrate in order to fit into the temporal expectations demanded by various institutions, social relationships, and labor arrangements. To recalibrate is to learn how to deal with time, be on top of one’s time, to learn when to be fast and when to be slow.” (p133)

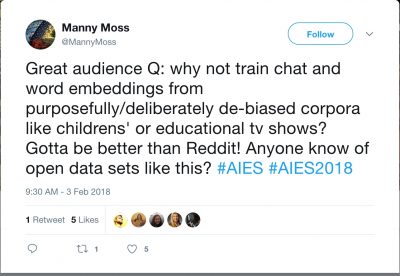

The other angle to temporal politics is its differential value depending on who is doing the valuing; and this came home to me through an interaction at an AI and Ethics academic conference in the United States a few weeks ago. At the end of one panel session on ‘ethical issues and models’ that included two papers on natural language processing (NLP), chatbots and digital assistants, I asked a question that I wanted a genuine technical response to:

“given that we know that social media speech is a convenient, never-ending source of training data, but a cesspit at the same time, has anyone looked elsewhere for training data for an AI assistant? It may sound naive but why can’t we have even some small scale experiments comparing what would happen if an entirely different training data set, say from the corpus of children’s literature, television programming, or young adult fiction – these exist in every language around the world – and which comes with some thought and controls – be used to train machine learning algorithms for speech?”

No one on the panel responded except to say they hadn’t really considered it. Later, however, someone came up to me and introduced themselves as working at a national standards-setting organization. One of the many things the organization does is to standardize and verify training data sets and how they are to be used. They were both intrigued and concerned by my question: intrigued because they saw the problems in using social media data, but concerned because children’s literature would not work as a training data set. At all. Nor teen fiction. I tried to emphasize the principle rather than the detail – are there other training datasets that might shift the standards of speech – ours and machine speech – and what would it take to employ them?

Eventually, they said, it comes down to this: Social media data is already there, it is faster and more reliable. It would take a lot more time and effort to develop new standards through different kinds of speech. Why is that a problem, I asked? Isn’t there time to innovate in new directions while carrying on with the same rubbish data? I mean, Twitter isn’t going anywhere.

They responded, and I paraphrase here: “If we have to start adopting new kinds of training data then it is just going to put a lot of pressure on the ecosystem and the supply chain of a lot of services based on NLP. These programmers and data scientists need to be on it, pushing out products more quickly if they want to maintain their edge. Social media is free, relatively, it’s just there. It would take a lot of effort and time to think about changing the model.”

Time – theirs – is an economically valuable resource, and they don’t want to spend it. Our time as users is also valuable, but as something to be extracted, monetized and manipulated. Finding ways to get users to spend more time on a website and fall deeper into its seductions is exactly what Harris did at Google. If there is sincerity in making time ‘well spent’, I’d argue it has to become a consideration throughout the process of development rather than be something managed at the output end of a highly asymmetrical power-economy.

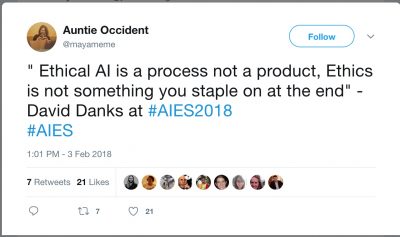

I believe this to be a question of ethics too, and of the structural dimensions of how technology industries build technology. I’ve argued that ‘ethics in AI/ML’ is being treated as an outcome, a rule-based, computational, decision-making process to be completed by machine learning. I believe many papers presented at this conference were in this mold. Instead, what if ethics were considered to be a series of ongoing, complex negotiations of values between humans and non-humans? And as integral to the design process? A few others at the conference were on the same page. I view the Center’s emphasis on de-addiction at the user-end as part of the ethics-tacked-on-at-the-end approach, and the ethics-as-output approach.

It is much harder to think about what it means to design and build technologies ethically than to make a technology artifact that makes decisions that are deemed ethical because they were programmed according to rules based on specific approaches to ethics. We need more of the former and less of the latter. Perhaps if time were spent well through the process of building digital technologies, society and democracy might be better served. The Center’s efforts, and those of others – like Listen Up from RagTag – are very welcome in this direction.

Maya Indira Ganesh is working on a PhD about the testing and standardization of machine intelligence. She enjoys working with scientists and engineers on questions of ethics and accountability in autonomous systems. She can be reached on Twitter @mayameme.

Comments 1

Roxana Marachi — February 18, 2018

Excellent, thought-provoking piece. I have re-shared it on a collection of articles related to Educational Psychology and Technology (with author attribution and links back to this site). Thank you http://bit.ly/edpsychtech