I dream of a Digital India where access to information knows no barriers – Narendra Modi, Prime Minister of India

The value of a man was reduced to his immediate identity and nearest possibility. To a vote. To a number. To a thing. Never was a man treated as a mind. As a glorious thing made up of stardust. – From the suicide note of Rohith Vemula (1989–2016).

A speculative dystopia in which a person’s name, biometrics or social media profile determine their lot is not so speculative after all. China’s social credit scoring system assesses creditworthiness on the basis of social graphs. Cash disbursements to Syrian refugees are made through the verification of iris scans to eliminate identity fraud. A recent data audit of the World Food Programme (WFP) has revealed significant lapses in how it manages personal data; this is especially concerning in Myanmar, one of the countries where the WFP operates, where religious identity is at the heart of the ongoing genocide.

In this essay I write about how two technology applications in India – ‘fintech’ and Aadhaar – are being implemented to verify and ‘fix’ identity against a backdrop of contested identities, religious fascism and caste-based violence in the country. I don’t intend to compare the two technologies directly; however, they exist within closely connected technical infrastructure ecosystems. I’m interested in how both socio-technical systems operate with respect to identity.

Recently in Aadhaar

Aadhaar is the unique 12-digit number, linked to biometric data, that the Indian government’s national ID project, run by the Unique Identification Authority of India (UIDAI), assigns to citizens. Registering for an Aadhaar number is not, however, mandatory. Financial technologies, or fintech, are (primarily) mobile-phone-based apps for financing, loans, credit, retail payments, money transfers, asset management and other financial services. Fintech applications circumvent the high cost of banking services, the unavailability of ‘bricks-and-mortar’ banks, and the complex procedures required to open a bank account.

Fintech and Aadhaar are both supposed to be, loosely, technologies for managing complex and ‘wicked’ problems: poverty, corruption and social exclusion. Both shape practices of identity verification and management through trails of financial transactions and biometric data. Missing and fake identity documents are common in India, and Aadhaar is expected to smooth bureaucratic processes such as opening a bank account, applying for a passport, receiving a pension or buying a SIM card through speedy identity verification.

On January 4, 2018, the Punjab-based newspaper The Tribune broke a story revealing that, for Rs 500 (about US$8), personal data associated with Aadhaar numbers could be accessed via a “racket” run on a WhatsApp group. It gets worse: “What is more, The Tribune team paid another Rs 300, for which the agent provided ‘software’ that could facilitate the printing of the Aadhaar card after entering the Aadhaar number of any individual.”

Nine years after Aadhaar first entered Indian citizens’ awareness, we are contending with it as much more than a biometric database and national ID project. It is also a public-private partnership, critical public infrastructure, a complex socio-technical system, a contested legal subject and, now, a flagrant security risk.

Indian civil society has long been thinking about Aadhaar in all these terms. We have been researching, analysing and thinking about what it means to develop a biometric database for 1.25 billion people, how this data will be stored, how it will be integrated into other public infrastructure and systems, and what all of this will mean for the country’s most marginalised and disadvantaged citizens.

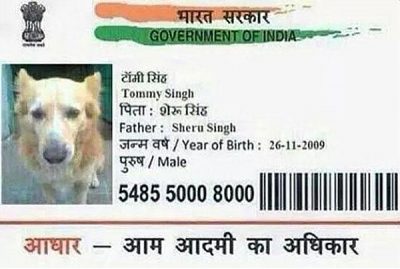

In critiquing the project, researchers, technologists, journalists, lawyers and activists have been trying to show that this is about more than iris scanners. One hilarious story stands out: in 2015, a man (who, it turns out, worked in an Aadhaar enrolment centre) applied for an Aadhaar card for his dog. Reverse-engineer this and it becomes clear that a biometrics-based unique identity project is not just about the biometrics, but about all the social, technical, cultural and legal systems in which biometric-capture technology is embedded.

More sobering are accounts of ‘Aadhaar deaths’. An elderly woman in a rural area was denied her food subsidies because her Aadhaar number wasn’t found in the system; she eventually starved to death. A 13-year-old girl died of starvation because her family’s Aadhaar card had not been properly ‘seeded’ to the Aadhaar database, so the family was not on the grid. Three elderly brothers also died because they could not produce Aadhaar cards to claim their food subsidies. Identity stands out in the stories of these starvation deaths: the victims were elderly, widowed or young, and Dalit. Usha Ramanathan, a lawyer and expert on Aadhaar, has been calling out these risks for some time; writing in The Indian Express, she notes that “illegality and shrinking spaces for liberty…have become the defining character of the project,” and she details a number of violations that affect the most disadvantaged in society.

The state has often ignored these concerns, but it cannot ignore The Tribune investigation. After initially denying the story, the UIDAI, the government agency that manages the Aadhaar project, filed a case against the Tribune journalist for ‘misreporting’, a move that is deeply problematic on many levels, not least for press freedom. Faced with public pressure, however, the agency has begun to adopt practical suggestions for improving the security of Aadhaar transactions.

Fintech and verification

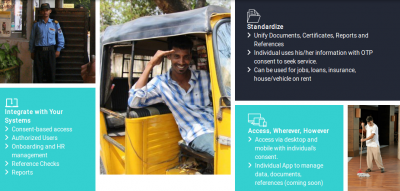

Fintech initiatives and biometrics claim to ‘reduce friction’ and increase trust in people (but really in big data infrastructures). OnGrid and IndiaStack are two such verification platforms used in fintech applications. Much like e-KYC (‘electronic Know Your Customer’), a method popular with banks for verifying customer identities, OnGrid and IndiaStack verify an individual’s identity documents, offer ‘verification as a service’ to clients such as fintech providers, and facilitate the use of Aadhaar. Quick identity verification makes financial and other commercial transactions ‘frictionless’.

The OnGrid homepage features images of people who provide manual and domestic services: bellboys, domestic helpers, rickshaw drivers, personal drivers. The class dimension is impossible to ignore. People in these roles are less likely to be trustworthy, or have ‘uncertain’ identities, and OnGrid is a way to verify them, the images seem to say.

Lenddo is a credit-scoring service whose machine learning algorithm needs only three minutes to process an individual applicant’s smartphone browsing history, GPS history and social media activity to generate “insights” that predict the applicant’s creditworthiness. However, as an employee of the company explained to me in an interview, a single data point, such as one Facebook post, does not determine creditworthiness:

“We gather at least 17,000 data points for every application…we look at behavioural patterns, not a single incident. We look at if you shop online and how much, not at all the things you buy. We need to look at a lot of data points to create a pattern and this is based on an algorithmic model we have developed… You also need to have a pattern of three years to determine something, not a random one-off thing.” (Interview with ‘KC’ conducted via VOIP, Oct 18, 2017)

The interviewee goes on to tell me that India is one of the most profitable markets because of its lax legal and regulatory environment. (Her tone is hushed, almost reverential, when she talks about the Indian market.) The company would love to get into Europe, but data protection rules there are too strong, she says.

In the early days of banking and financial institutions in the industrial age, creditworthiness was judged on the basis of an individual’s social graph and personal networks. This later gave way to assessments based on actual financial indicators, such as income, assets and job security. Yet with fintech applications like Lenddo, as in the Chinese case, we see a return to the earlier metric of creditworthiness, albeit without individuals fully knowing how it works.

In July 2017, The Economic Times reported that two new fintech startups working in India, EarlySalary and ZestMoney, were using customers’ online activity to track and verify them. The CEO of EarlySalary described a case in which the company rejected an online loan application from a young woman who was perfectly eligible for it. The company had worked out that she was actually taking the loan for her live-in boyfriend, who was unemployed and had himself applied for a loan and been rejected. Here is how they figured it out:

“The startup’s machine learning algorithm used GPS and social media data – both of which the duo had given permissions for while downloading the app – to make the connection that they were in a relationship. The final nail in the coffin: the lady in question was transferring money every month to the boyfriend which showed up in the bank statements they had submitted for the loans.”

The story goes on to quote the CEO of ePayLater, who says that the company’s machine learning algorithm uses anywhere from 800 to 5,000 data points to assess a customer’s willingness and ability to repay a loan: from keyboard typing speeds (“if there is a lot of variation in your usual behavior, the machine will raise an alert”) to Facebook (“Accessing her social media, we learnt they were dating”) to LinkedIn (“To understand if one is working or not we usually check his LinkedIn profile”).

Identity and Violence

But what does it mean to verify or fix identity against a backdrop of ferocious religious violence, ‘beef lynchings’ and the rhetoric of ‘love jihad’, on top of endemic caste discrimination and violence against Muslims? The porosity of the Aadhaar database and the Aadhaar starvation deaths are more than technical lapses. They are serious breakdowns of complex socio-technical systems, and they are unlikely to inspire confidence among people who are marginalised. In India it is not uncommon for people to size each other up on meeting by asking ‘where are you from?’, which is shorthand for many things, including ‘where are you on the social hierarchy in relation to me?’

In the early days of the Indian government’s 2016 ‘demonetisation’ drive to manage corruption and introduce negative interest rates, a friend told me that his elderly Muslim parents had received messages on WhatsApp groups saying that this was the Indian government’s way of harassing Muslims by taking away their money (Muslims are among the poorest people in India) and reducing them to penury. The WhatsApp group messages urged older people to quickly take their money out of banks. Such heartbreaking stories of misinformation and disinformation are part of what it means to apply predictive algorithms and biometrics in already-stratified, violent and hierarchical societies.

The challenge to India’s brutal caste system is not a new phenomenon, but it has gathered momentum recently, possibly thanks to increased media attention to caste violence and student activism, among other factors. Caste, in the sense of jaat or jaati, is an enduring, fundamental variable in India’s byzantine system of social stratification. Caste is particularly violent because it is both fixed and yet an entirely social construct; a person born into a particular jaati can do nothing to escape it. It takes on the character of something biologically determined and passed on from parent to child, with no option for conversion, mixing or ‘lightening’. Endogamy, or marriage within jaati, is the expected norm. Inter-caste marriages happen but are met with everything from social snubs to criminal violence; the children of such unions take on their father’s caste. There is no quadroon version of jaati. There is ‘passing’, however: it is not uncommon for Dalit Indians to change their names in order to pass as members of a more favourable caste, to gain access to housing, jobs and social inclusion, and to avoid violence.

The prospect of having to manage and hide one’s identity when surnames, addresses and social graphs are known to machines is chilling. Nor does it seem particularly far-fetched at present, given the state’s encouragement of its religious fundamentalist, fascist base.

Epilogue / Prologue

On August 24, 2017, the Supreme Court of India delivered a historic judgment upholding the constitutional right to privacy and drawing clear connections between personal identity, privacy, dignity and the health of a democracy. The judgment links privacy to personal identity, including sexual and reproductive identities and choices, and writes extensively about ‘informational privacy’:

“Knowledge about a person gives a power over that person. The personal data collected is capable of effecting representations, influencing decision making processes and shaping behaviour. It can be used as a tool to exercise control over us like the ‘big brother’ State exercised. This can have a stultifying effect on the expression of dissent and difference of opinion, which no democracy can afford.”

At the time of writing, the Indian government is on the fourth day of a Supreme Court hearing in which it is defending Aadhaar in light of the privacy ruling. Given The Tribune investigation and the absence of clear data protection guidelines in the country, 2018 is going to be an interesting year for Aadhaar and for the future of the biometrics project in India, and hopefully a better one for Indian citizens.

Caste, biometrics and predictive algorithms are forms of power masquerading as knowledge about people, and social media-derived social graphs serve as proxies for trust in them. There is no tidy stack of big data infrastructures that can be plugged in to eliminate violence, poverty and corruption in India (or anywhere); but fintech and Aadhaar are the latest in a long history of schemes and programs attempting to do just that. There are untidy, imperfect interconnections between digital technology, privacy and the contestations and manipulations of identity currently underway in India. In addressing these places of imperfection, we must deconstruct the ways in which big and biometric data become a tool to perpetuate long-standing and deep-rooted forms of discrimination.

In the summer of 2017 I wrote an essay about financial technologies and Aadhaar, but for various reasons it was not published at the time. The present essay draws on the original and reflects changes in the landscape in the five months since the Supreme Court ruling. The original essay was supported through work at Tactical Technology Collective. Some of these ideas were first developed for a panel at Transmediale in Berlin in January 2017.

Maya lives in Germany and on Twitter as @mayameme
