On September 2, the photograph of 3-year-old Aylan Kurdi lying face down on a Turkish beach circulated internationally on social media. Amid discussions of whether or not it was ethical to post, tweet, and share such a heart-wrenching image, The New York Times rightly noted that the powerful image has spurred international public attention to a crisis that has been ongoing for years. As Anne Barnard and Karam Shoumali said:

“Once again, it is not the sheer size of the catastrophe—millions upon millions forced by war and desperation to leave their homes—but a single tragedy that has clarified the moment.”

The conflict in Syria has lasted almost five years now. With more than half the population forced to leave, the United Nations reported that the Syrian conflict now represents the largest displacement crisis in the world. Over 12 million people require some form of humanitarian assistance. And almost half of those displaced are children. Like Mohammed Bouazizi’s self-immolation that sparked the Arab Spring (and, coincidentally, the current civil war in Syria), the image of Aylan, too, has the capacity to change the world. Bouazizi was not the first person to set himself alight in protest, just as Aylan was not the first child to wash ashore on Mediterranean beaches.

Indeed, those who have been following the refugee crisis over the past four years have viewed countless tragic images. But there is — for the moment, at least — something significant about this particular photograph. It could be because the image is deceptively peaceful, failing to reflect the violence that pushed his parents to flee or the family’s terrifying experience at sea that ultimately led to the deaths of Aylan, his brother Galip, and their mother Rehan. It may also be because of his clothing: red shirt, blue shorts, and Velcro sneakers. He could be anyone’s son, brother, nephew.

Aylan’s image has galvanized attention from around the world, especially in the West. The public’s concern and outrage after the photo circulated on social media have already had a significant impact on the refugee crisis. This single tragedy has become the symbol of the refugee crisis in the Middle East. The image and subsequent public outcry have led to an increase in charitable donations, impacted election campaigns, and prompted the public to demand more of their governments, resulting in Western nations around the world pledging to increase the number of refugees they will take.

Although it is unfortunate that it takes something as tragic as the body of a boy lying alone on a beach to solidify public resolve, it is also an important reminder that we are, as Goffman suggested, “dangerous giants.” We have the capacity to enact change on a level that is difficult to imagine as an individual.

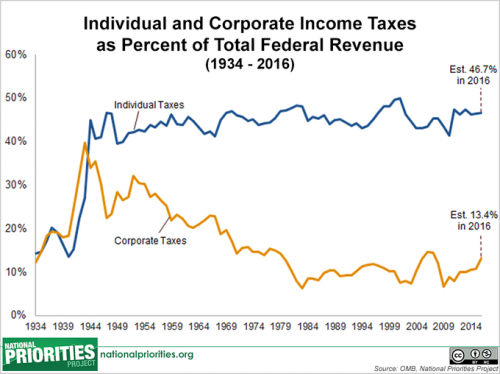

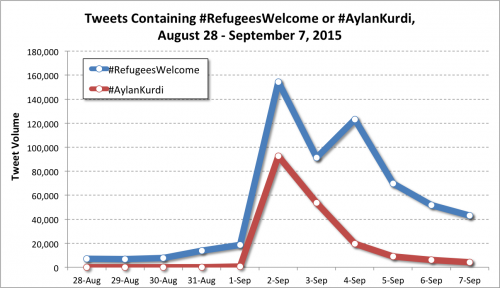

The graph below shows Twitter activity both before and after the photo of Aylan went viral. Tweet volume about Syria has more than doubled since the world was shown the image. Tweets welcoming refugees from the region showed an even larger increase. And, although tweets with Aylan’s name appear to have been short-lived, perhaps the international attention they produced can be harnessed as people are forced to learn more about why this tragedy occurred and pledge support.

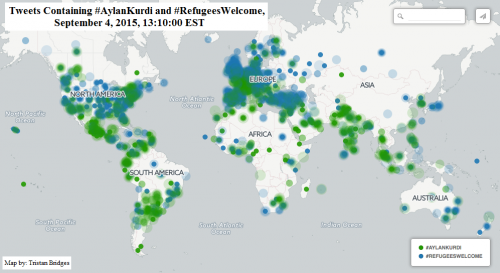

When we georeference and map tweets containing the hashtags #RefugeesWelcome and #AylanKurdi, we can also see how this unfolded around the world. Twitter is a crude measure of impact. Yet, just as Barnard and Shoumali suggested, a single tragedy amidst a conflict that has led to the deaths of so many seems to have helped to capture the attention of the world. See the snapshot of Twitter activity around the world using the hashtags #AylanKurdi (green) and #RefugeesWelcome (blue) two days after the photograph went viral (below):

So, can an image of a child change the world? Typically, no. But a powerful image under the right conditions might have an impact no one could have predicted.

Originally posted at Feminist Reflections.

Tara Leigh Tober and Tristan Bridges are sociologists at the College at Brockport (SUNY). You can follow them on Twitter at @tristanbphd and @tobertara.