Cyborgology readers, I need your help. I’ve put the post I was writing for today on hold because I’m short a key piece of terminology, and I’m hoping one of you can either a) point me to a good preexisting term, or b) help me to assemble a term that’s a bit more graceful than the ones I can come up with on my own.

The phenomenon I’m trying to describe is one that I’ve encountered a number of times over the past week, and is a theme I identify fairly often in conversations about newer technologies. I describe it below, first generally and then with a couple of recent examples.

To set up my description, remember that ‘the physical’ and ‘the digital’ aren’t separate worlds, and that human behavior ‘online’ has a whole lot in common with human behavior ‘offline.’ Note that I’m specifically avoiding saying that behavior online “mirrors” behavior offline here, because that would imply that online and offline expressions of a given behavior are actually two separate behaviors that closely resemble each other; after all, your reflection closely resembles you, but you and your reflection are not the same thing. I’m starting from the assumption that the various online and offline expressions of a behavior (sharing, bullying, etc) are, at the most fundamental level, the same behavior.

Now that we’ve established that, here’s what I’ve observed: a new technology (or a change to an existing technology) enters the scene, and makes more explicitly visible to us some facet or aspect of human social behavior that a) is usually more latent, subtle, or obscured, and that b) makes us feel anxious, uncomfortable, or even repulsed. The behavioral facet we see on display through the new technology isn’t new, it’s just newly visible (or more visible than it was before); it is also not unique to behavior connected to the new technology, even if the affordances of that technology seem to encourage the specific behavior.

When we try to identify and explain our unpleasant feelings, however, sometimes we don’t correctly identify the source of our discomfort as having been forced to confront a distasteful aspect of how our society works that we would rather have kept ignoring. Instead, we blame the new technology—and we blame it not for being a too-effective lens, but rather for “causing” or even “being” the unpleasant aspect of our society itself.

To help illustrate what I’m talking about, here are a couple of recent examples:

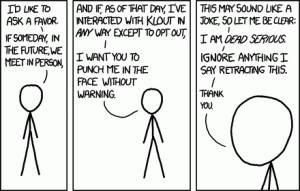

1) Klout. We love to hate Klout—or at least, I love to hate Klout; as I’m so fond of repeating, Klout “encourage[s] nothing good”—but let’s face it: “social ranking” doesn’t happen only through Klout. Social ranking existed well before Klout (else, why would anyone have bothered to build Klout? The concept would have made no sense), and it had the power to affect who got jobs and preferential treatment before Klout, too. At the most basic level, Klout isn’t creating any new kinds of human behavior; Klout is just making more explicit and blatantly visible something that’s usually easier to hide or ignore. Does that something (social ranking) make us uncomfortable? Yes it does. And is Klout trying to smack a glossy veneer of Science™ onto social ranking? Yes it is (and that’s what really gets me). But in the end, what we’re doing when we hate Klout is resenting it for forcing us to acknowledge something about our society we’d rather ignore. Pretending that Klout is the cause rather than a symptom is just an attempt to re-obscure what’s too disquieting to have in direct view.

2) Facebook’s recent announcement that it will give users the option of paying to promote their posts on the site, so that more of their ‘friends’ see them. There’s a lot tied up in here to dislike (where’s that “dislike” button when you need it?): the idea that money talks, the idea that we have to buy our friends’ attention (we don’t like to think about friendship and money at the same time), the idea that our care and attention—two important aspects of friendship itself—can be purchased, the idea that people should act like corporations (first corporations get to be people, now this?), and the idea that your personal identity has become a brand identity, to name just a few. But again, promoted status updates are a symptom, not the cause; Facebook wouldn’t be rolling out this option if it didn’t think people would actually use it. We can defriend people who promote status updates all we want, but again, this is just an effort to re-obscure; the problem (problems, really) isn’t the promoted updates themselves.

There are other recent examples related to self-tracking and decision-making apps that I’ll be talking about next week, but for now, I’m looking for some new words:

What do we call what it is that we’re really reacting to when we lash out against technologies like Klout and promoted status updates, which is the fact that something threatening, distasteful, and inescapable is now too visible, too explicit, too overt, too blatant for comfort, is displayed in too-stark relief, has been distilled down to a too-bitter concentrate that’s near impossible to swallow? “Explicitization,” “salientization,” and “deobscuration” start to get at the point, but I have to admit: they’re pretty awful as words.

Similarly, what do we call our reactions, our misplaced resentment? What do we call the attempt to re-obscure that which we don’t want to confront by trying to turn the occasion for visibility into the phenomenon itself, by treating the setting of a behavior’s display as its root cause?

Please leave your ideas and suggestions in the comments section; I’m looking forward to your responses!

Blame the phone image from http://www.redorbit.com/news/technology/1112522497/when-carriers-fight-we%E2%80%99re-the-ones-to-blame/

xkcd Klout comic from http://www.xkcd.com/1057/

Strange creature can’t look photo from http://1funny.com/cant-look/

Comments 30

Donald W. Taylor II — October 6, 2012

I'm sure if you dig through Heidegger, you'll find something built up from "revealing". Technik-entbergen-angst. But that won't get you out of ugly terminology.

David Z. — October 6, 2012

I would add "quantification" is a useful term for this sort of discussion

Donald W. Taylor II — October 6, 2012

But seriously, the Wikipedia page on Heidegger's The Question Concerning Technology might at least offer a few new roots or frames from which you might derive a useful and elegant term.

http://en.wikipedia.org/wiki/The_Question_Concerning_Technology

nathanjurgenson — October 6, 2012

"Klout isn’t creating any new kinds of human behavior" -- in the twitterdrome this line has caused some confusion...though i suspect the issue is merely semantic. can you clear this line up a bit? i suspect you mean that social ranking is not new. but surely Klout's flavor of social ranking *is* new, and that will lead to new behaviors. the most trivial example would be that checking a Klout score is a new behavior that is the result of Klout. right?

nathanjurgenson — October 6, 2012

also, the reason i don't like “Explicitization,” “salientization,” and “deobscuration” isn't that they are ugly; i do not think they capture enough of the full range of what is happening (no term is *perfect* in this respect, but i think these terms miss something fundamentally critical, which you do bring up in this post).

Baudrillard talked about Disneyland's simulations (e.g., Main St USA) as obscuring the fact that *the rest of America* is a simulation.* i think there is a parallel, and it isn't just about making things more explicit, more salient and less obscure; just the opposite: Klout can also make non-Klout social ranking more obscure. indeed, you already start to make this point in the Klout paragraph above: "Pretending that Klout is the cause rather than a symptom is just an attempt to re-obscure what’s too disquieting to have in direct view."

yes, Facebook can make identity performance more explicit; but Facebook is often deployed as performance in order to (wrongly) claim that offline life is not performance. constructing Facebook as a performance is often about simultaneously co-constructing not-facebook as real and authentic. in this sense, Facebook-as-performance can obscure and hide offline performativity.

thus, i'd be very wary of any term that speaks of this trend as about revealing or demystifying when, fundamentally, it is also about obscuring. why focus on what is revealed and ignore what is simultaneously concealed? (remembering i come from the assumption that all revealing involves some concealment and vice versa). i don't think we need two terms here, but one that gets at both the revealing and the concealing.

-------

*"a simulation of the third order: Disneyland exists in order to hide that it is the "real" country, all of "real" America that is Disneyland (a bit like prisons are there to hide that it is the social in its entirety, in its banal omnipresence, that is carceral). Disneyland is presented as imaginary in order to make us believe that the rest is real, whereas all of Los Angeles and the America that surrounds it are no longer real, but belong to the hyperreal order and to the order of simulation. It is no longer a question of a false representation of reality (ideology) but of concealing the fact that the real is no longer real, and thus of saving the reality principle." from: http://www9.georgetown.edu/faculty/irvinem/theory/baudrillard-simulacra_and_simulation.pdf [pdf]

and because it's hilarious that a blog comment has a footnote, let's continue to nerd out about the fact that this whole point of constructing one thing to obscure something else has a long history. i'd love to hear more examples of this. my favorite is Foucault discussing the construction of mental illness as really being more about the simultaneous construction of "normal." and, in the quote above, Baudrillard is also citing Foucault with the point that prisons often obscure the fact that it is the rest of society that is carceral.

muscadine — October 6, 2012

seems like Weber's concepts of calculability and demystification can do a lot of work here.

Donald W. Taylor II — October 6, 2012

I don't think you should throw away the mirror so quickly – at least as a model of what you are looking for. I think what you are describing is a set of phenomena where in one configuration they are invisible or misperceived (e.g. most of my own face is beyond my field of vision; when I look down at my body, I get an unusual perspective on it – my stomach is really big, my feet are really small, like a Venus of Willendorf). But when deracinated or put into interaction with a third thing, they become visible or differently perceived. The on-line doesn't "mirror", but it does "hold up a mirror to", insofar as what a mirror does is create a second perspective outside of your head. This is still me castigating, but I am hoping this maybe gives you a few more ideas than me.

Victor — October 6, 2012

For some (I'd say most) people, social ranking is an acutely felt aspect of everyday life. It's just common knowledge that our life prospects are foreshortened by a variety of social ranking systems. Therefore it's not a shock when someone brings it up. It's only shocking for those who've had a hand in obscuring it (the 'we' of articles like this)- whose power is so great, whose skill set contributes to the maintenance and reproduction of techno-social ranking and thus has material effects in the world- and in this case is further consolidated by, say, maintaining a value-neutral stance towards the technologies that intensify it. So that's my suggestion: intensification. Social ranking has always existed, yes, but struggles have been waged to counteract its effects. Klout contributes nothing to these struggles. In that case, you might call your 'misplaced resentment' an effect of class bias.

atomic geography — October 6, 2012

The context of these behaviors is what's new so it seems to me just a case of context collapse, or maybe in some sense decontextualization, kinda like the depersonalization resulting from dissociation. (off the top of my head these are all the "tion" words I could come up with.)

Ron Eglash — October 6, 2012

I think it would help to consider more varieties before you settle on a name:

1) Muscadine notes "calculability" and David Z. notes "quantification."

2) The two you cite above (Klout status and Facebook updates) are both cases of commodification.

If you are only concerned with examples in which one or both of the above are happening, that narrows it down. However if you are including something like the Christian "fish" sticker (car bumpers, store windows etc.), that also functions as a technology that makes some social attribute visible in discomforting ways (at least to me), and does not seem strongly tied to commodification or quantification.

Most examples I can think of seem to involve commodification:

There is a long history of status symbols making visible that which was previously obscured -- the alligator on the polo shirt for example.

There was an on-going discussion about whether or not it was pretentious bragging to have an email signature that says "sent from my iphone" http://www.thegeekpub.com/972/sent-from-my-iphone. No quantification here.

Finally I would note that it can be used as critique or resistance: for example a group of anarchists used to sneak up to expensive vehicles and paste on a bumpersticker that read "thousands of people died so that I could own this car."

Sabrina Weiss — October 6, 2012

I liked "The Question Concerning Technology" and found it very useful, even as a launching point for further discussion.

This is really cool, and I have been working on similar types of topics, such as how the use of online technologies exposes unspoken assumptions we held about primacy or idealization of meatspace interactions (using intersectionist perspectives). I'd recommend taking a look at schema theory from psych and at the "Uncanny Valley" effect to give some grist for the mill.

Maybe we could talk more on this? (I'm a colleague of DA Banks)

In Their Words » Cyborgology — October 7, 2012

[...] “is Klout trying to smack a glossy veneer of Science™ onto social ranking?” Follow Nathan on Twitter: @nathanjurgenson [...]

Alexander I. Stingl — October 7, 2012

Well, besides a return to Deleuze/Guattari, Walter Benjamin, Gregory Bateson and Georges Bataille for already existing terminologies on what you describe as a double-bind or dialectics between technological and cultural machines, possibly looking at Lacan respectively Zizek, I'd suggest reading the more recent stuff by N. Katherine Hayles, and on matters of what is called 'techno-somatic involvement' reading up on either or all from Bernard Stiegler, Gilbert Simondon (or about him, since little is available in English), Heinz von Foerster, Niklas Luhmann, Ingrid Richardson, Don Ihde, Brian Massumi, Levi Bryant, Erin Manning, and, of course, there is a way of doing this Deleuzean style via DeLanda.

If I had to invent a term, even though I believe enough alternative conceptualizations exist, such as by the few authors I mention, I would, perhaps, take Levi Bryant's 'larval subjects' and turn it upside down: 'larval objectivation'.

Best,

Alex

Ready to be a Brand? » Cyborgology — October 9, 2012

[...] speaks in many ways to the allusive term that Whitney Boesel (@phenatypical) scrambled to come up with last week. Paying to promote a [...]

Brent Graber — October 9, 2012

The first words that came to my mind to describe #1 were "revelation" or "amplification." Those don't get at the specifically negative sense you describe, but I think the key point is the highlighting, not that what's highlighted is a negative trait. As for #2, why not "suppression?" No neologisms needed; you wouldn't even be using words in strange ways.

If you want terminology borrowed from another field you could consider Don Ihde's "magnification" and "reduction." He uses these to talk about the effects of technology on our experience of the world. To my ear those seem pretty close to what you're describing.

Louise — October 12, 2012

Interesting discussion. How about 'elucidation'? (Drawing into the light.) I remember some kind of meme going around trying to equate different social media with the idea of the cardinal sins - e.g. Facebook = vanity, LinkedIn = greed and so on. Another connection between an age-old expression of human vice and new technologies.

Apps, Autonomy, & Anxiety: a Sociological Perspective » Cyborgology — October 13, 2012

[...] week I wrote about a pattern I’ve been seeing, one for which I wanted to create a new term. I’m still working on the terminology issue, but the [...]